Unlock the Power of Kafka for Seamless Integration

Revolutionize Your API and Cloud Development with Kafka

Discover how Kafka can transform your data streaming capabilities, enhancing efficiency and scalability in your API and cloud projects.

The Role of Kafka in Modern Development

Understanding Kafka's Impact on API and Cloud Solutions

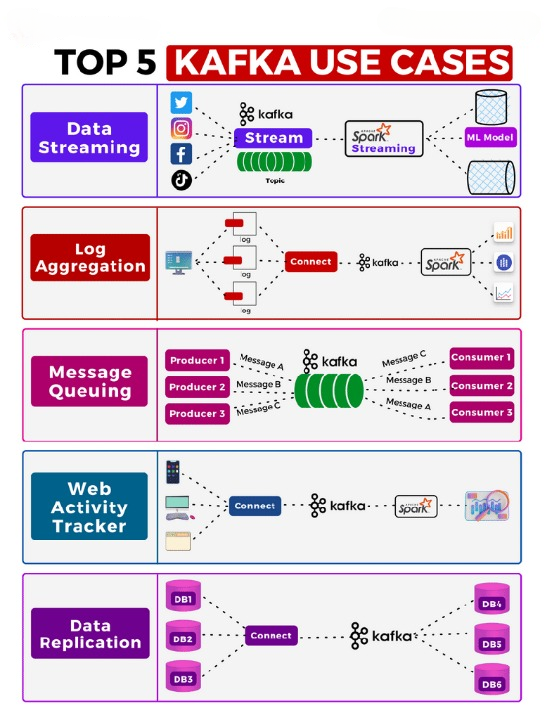

Apache Kafka is a distributed event streaming platform built for real-time data processing and integration. Because it handles high data volumes at low latency, it is well suited to modern API and cloud development. By moving data reliably between systems, Kafka helps businesses build scalable, resilient architectures. As organizations rely more heavily on cloud-based solutions, Kafka’s replicated, durable log becomes increasingly important for keeping data available and consistent across services. This combination of versatility and performance makes it a common foundation for digital transformation initiatives and for responsive applications that keep pace with changing demands.

With Kafka, developers can streamline data pipelines, reduce complexity, and enhance the reliability of their systems. Its distributed architecture supports a wide range of use cases, from real-time analytics to event-driven microservices, making it a key enabler of innovation in the tech industry. By leveraging Kafka, businesses can achieve faster time-to-market, improve customer experiences, and gain a competitive edge in an increasingly data-driven world.

Essential Kafka Configurations for Optimal Performance

Heap Size Management

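Configure the Kafka broker’s JVM heap size to optimize memory usage and keep operations stable. A heap that is too small leads to out-of-memory errors and long garbage-collection pauses, while an oversized heap takes memory away from the operating system’s page cache, which Kafka relies on heavily for reads and writes.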

Log Retention Strategies

Adjust log retention settings to balance storage costs and data availability. Tailor retention periods to your specific use case to maintain the right amount of historical data.

Maximize Message Throughput

Fine-tune message size and batch settings to boost throughput. Larger batch sizes can improve performance, while careful configuration of message size ensures efficient data handling.

Enhancing Kafka Performance

Optimizing Kafka performance means tuning both producers and consumers for high throughput and low latency. Producer settings such as batch.size, linger.ms, and acks have a direct effect on how efficiently your Kafka deployment moves data.

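For producers, a larger batch.size combined with a small non-zero linger.ms lets more records travel in each request, which improves throughput at the cost of a little added latency. The max.in.flight.requests.per.connection setting balances throughput against message ordering: without idempotence, a retried batch can be written after a later one when more than one request is in flight, whereas with enable.idempotence=true Kafka preserves per-partition ordering with up to 5 in-flight requests.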
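As a rough illustration of the producer settings discussed above, the following Python sketch builds a configuration dictionary keyed by the standard Kafka producer property names. The helper name `build_producer_config` and the specific values (64 KiB batches, a 10 ms linger) are assumptions chosen for illustration, not recommendations from this article; suitable values depend on your workload.

```python
def build_producer_config(bootstrap_servers, preserve_order=True):
    """Return producer settings keyed by Kafka property names.

    Hypothetical helper: the values are illustrative starting points,
    not tuned recommendations.
    """
    config = {
        "bootstrap.servers": bootstrap_servers,
        # Upper bound, in bytes, on a batch of records for one partition.
        "batch.size": 64 * 1024,
        # Wait up to 10 ms for more records before sending a batch.
        "linger.ms": 10,
        # Require acknowledgement from all in-sync replicas.
        "acks": "all",
        # Default number of unacknowledged requests per connection.
        "max.in.flight.requests.per.connection": 5,
    }
    # With idempotence on, per-partition ordering survives retries with up
    # to 5 in-flight requests. With it off, a retried batch may be written
    # after a later one.
    config["enable.idempotence"] = bool(preserve_order)
    return config


if __name__ == "__main__":
    for key, value in build_producer_config("localhost:9092").items():
        print(f"{key}={value}")
```

The dictionary uses the same property names as the Java client, so it should map directly onto a client library that accepts Kafka-style configuration keys. The broker address `localhost:9092` is a placeholder.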

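On the consumer side, aligning the number of consumers in a group with the number of partitions is crucial. Each partition is read by at most one consumer in a group, so consumers beyond the partition count sit idle, while too few consumers leave each one handling several partitions. Matching the two keeps every partition processed in parallel and prevents bottlenecks.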
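To see why the consumer count matters, the sketch below simulates how the partitions of one topic might be spread across a consumer group. It is a deliberately simplified round-robin model, not Kafka’s actual assignor logic, and the function names `assign_partitions` and `idle_consumers` are hypothetical.

```python
def assign_partitions(num_partitions, consumers):
    """Spread partition ids over consumers in round-robin order.

    Simplified model for illustration; each partition gets exactly one
    owner, mirroring the one-consumer-per-partition rule within a group.
    """
    if not consumers:
        raise ValueError("at least one consumer is required")
    assignment = {consumer: [] for consumer in consumers}
    for partition in range(num_partitions):
        owner = consumers[partition % len(consumers)]
        assignment[owner].append(partition)
    return assignment


def idle_consumers(assignment):
    """Return the consumers that received no partitions."""
    return [consumer for consumer, parts in assignment.items() if not parts]


if __name__ == "__main__":
    group = ["c1", "c2", "c3", "c4", "c5", "c6"]
    result = assign_partitions(4, group)
    print(result)
    print("idle:", idle_consumers(result))
```

With 4 partitions and 6 consumers, two consumers receive nothing to read. With 6 partitions and 2 consumers, each consumer handles three partitions, which works but limits parallelism.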

Configuring Kafka for Optimal Performance

Step 1: Set the Kafka broker’s heap size by exporting KAFKA_HEAP_OPTS in your environment to ensure efficient memory usage.

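Step 2: Adjust the log.retention.hours parameter to manage message retention based on your use case. The broker default is 168 hours (7 days); a shorter value such as 72 hours reduces storage needs when older data is not required.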

Step 3: Configure message.max.bytes and replica.fetch.max.bytes to control message size. Set replica.fetch.max.bytes at least as large as message.max.bytes so that followers can replicate the largest messages the broker accepts.
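The three steps above can be sketched as a small Python helper that renders the environment line and the broker properties in one place. The function name `render_broker_settings` and the example values (a 4 GB heap, 1 MiB messages) are assumptions for illustration only; the 72-hour retention comes from Step 2. The size check reflects the common guidance that replicas should be able to fetch the largest message the broker accepts.

```python
def render_broker_settings(heap_gb, retention_hours, max_message_bytes,
                           replica_fetch_bytes=None):
    """Render a KAFKA_HEAP_OPTS export line and server.properties entries.

    Hypothetical helper for illustration; it only formats text and does
    not touch a running broker.
    """
    if replica_fetch_bytes is None:
        # Default to the message limit so replicas can fetch any message.
        replica_fetch_bytes = max_message_bytes
    if replica_fetch_bytes < max_message_bytes:
        raise ValueError(
            "replica.fetch.max.bytes should be at least message.max.bytes"
        )
    # Step 1: equal minimum and maximum heap avoids resizing at runtime.
    env_line = f'export KAFKA_HEAP_OPTS="-Xms{heap_gb}g -Xmx{heap_gb}g"'
    # Steps 2 and 3: entries intended for the broker's server.properties.
    properties = "\n".join([
        f"log.retention.hours={retention_hours}",
        f"message.max.bytes={max_message_bytes}",
        f"replica.fetch.max.bytes={replica_fetch_bytes}",
    ])
    return env_line, properties


if __name__ == "__main__":
    env_line, properties = render_broker_settings(4, 72, 1024 * 1024)
    print(env_line)
    print(properties)
```

The export line would be set in the shell before starting the broker, and the property lines would go into the broker’s configuration file.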

Enhance Your Kafka Experience

Unlock the full potential of your Kafka deployment with APILabs. Our team of experts is ready to guide you through every step of implementation and optimization. Reach out today to ensure your system is running at peak performance.